Are we actually ready for scale?

A diagnostic for founders, CEOs and boards at Series B / pre-investment

Scaling is intoxicating. Investors talk about growth, GTM efficiency and network effects; product teams dream in new features; marketing plans promise explosive reach. But the uncomfortable question that keeps CEOs and boards awake is whether your digital estate can carry that ambition without collapsing under its own weight.

Why the question matters

At Series B and in pre-investment diligence, growth expectations become binary: either your platform can reliably convert more users and serve them at lower marginal cost, or the business is adding churn and technical overhead faster than revenue. Investors often underestimate digital risk because the surface metrics (traffic, downloads, ARR) look healthy while the underlying systems and processes are fragile.

Scaling without repairing these weaknesses multiplies three costs:

- Acquisition waste — new users are lost through broken journeys or inconsistent UX.

- Operational drag — platform debt increases maintenance and slows roadmap velocity.

- Value leakage — poor measurement, fragmented Martech and data gaps mean you can’t prove unit economics.

So the right frame is not “can we build more features?” but “can the business survive added volume, complexity and scrutiny?”

Four domains that decide scale readiness

Any credible readiness check focuses on four places where scale fractures first.

1. Platform health and platform debt

Look for brittle integrations, sprawling undocumented APIs, low automated test coverage and ad-hoc scripts that keep things running. Key signals:

- High mean time to recover (MTTR) for incidents.

- Large, tightly coupled modules that cannot be deployed independently.

- Production hot-fixes more common than planned releases.
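
The first and third signals can be put into numbers with very little tooling. The sketch below is a minimal illustration, not a prescribed method: the function names, the `"hotfix"`/`"planned"` labels and the sample incidents are all hypothetical, and it assumes you can export detection and resolution timestamps from your incident tracker.

```python
from datetime import datetime, timedelta

def mean_time_to_recover(incidents):
    """Average of (resolved - detected) over a list of datetime pairs."""
    if not incidents:
        raise ValueError("need at least one incident")
    total = sum((resolved - detected for detected, resolved in incidents), timedelta())
    return total / len(incidents)

def hotfix_share(release_kinds):
    """Fraction of releases labelled 'hotfix' in a list of 'hotfix'/'planned' labels."""
    if not release_kinds:
        raise ValueError("need at least one release")
    return release_kinds.count("hotfix") / len(release_kinds)

# Made-up sample data, for illustration only.
incidents = [
    (datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 5, 9, 30)),     # 30 minutes
    (datetime(2024, 1, 12, 14, 0), datetime(2024, 1, 12, 15, 30)),  # 90 minutes
]
releases = ["hotfix", "planned", "hotfix"]

print("MTTR:", mean_time_to_recover(incidents))
print("Hot-fix share of releases:", round(hotfix_share(releases), 2))
```

A hot-fix share above 0.5 corresponds to the signal above: production hot-fixes outnumber planned releases.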

2. User experience and journey integrity

Fragmented UX isn’t an aesthetic problem — it’s a conversion and trust issue. Signs of trouble:

- Multiple ways to do the same thing with inconsistent outcomes.

- Unmeasured or high friction in key flows (signup, onboarding, payment).

- Accessibility, localisation and device gaps.

3. Martech, measurement & insight gaps

Growth stops being repeatable when you can’t measure what matters. Watch out for:

- No single customer view; identity stitching is ad-hoc.

- Tagging chaos in tag managers and duplicated events.

- Disconnected attribution and cohort analysis.
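
Duplicated events are one of the easier gaps to check from a raw event export. The following is a minimal sketch that assumes each event is a dictionary with `user_id`, `name` and `timestamp` fields; that schema is hypothetical and will differ by analytics tool.

```python
from collections import Counter

def duplicate_events(events):
    """Return {(user_id, name, timestamp): count} for keys seen more than once."""
    counts = Counter((e["user_id"], e["name"], e["timestamp"]) for e in events)
    return {key: n for key, n in counts.items() if n > 1}

# Made-up sample export, for illustration only.
sample = [
    {"user_id": "u1", "name": "checkout_completed", "timestamp": "2024-01-05T09:00:00Z"},
    {"user_id": "u1", "name": "checkout_completed", "timestamp": "2024-01-05T09:00:00Z"},
    {"user_id": "u2", "name": "signup", "timestamp": "2024-01-05T09:01:00Z"},
]

for key, n in duplicate_events(sample).items():
    print(key, "fired", n, "times")
```

Exact-match keys only catch verbatim double-fires. Events duplicated with slightly different timestamps would need a time-window comparison, which this sketch does not attempt.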

4. Governance, risk and hidden dependencies

These are often invisible until they fail. Examples:

- Third-party vendors critical to revenue with no SLAs.

- No documented incident playbooks, backups or runbooks.

- Unverified compliance (GDPR, PCI) in customer-facing flows.

The readiness checklist investors will reward

Score each item 0–3 (0 = not present, 3 = mature). Total out of 30.

- Critical flow availability — monitored and paged for (signup, checkout, auth).

- Automated testing — unit, integration and end-to-end coverage for core flows.

- Release safety — CI/CD with canary/blue-green deployments and rollback.

- Observability — structured logs, tracing, SLOs and dashboarding for core metrics.

- Data quality & single customer view — identity resolution and reliable user events.

- Martech hygiene — single tag manager, versioned events, documented mapping.

- UX consistency — documented design system and shared component library.

- Security & compliance — pen tests, PCI/GDPR posture, dependency scanning.

- Dependency map — inventory of third-party services and critical SLAs.

- Operational maturity — incident runbooks, run rate for bugs, and on-call readiness.

Interpretation:

0–9 = Red: Significant structural deficits. Pause scale; prioritise stabilisation.

10–20 = Amber: Partial readiness. Tactical fixes will help, but risk remains.

21–30 = Green: Low digital risk for immediate scale; consider targeted hardening.
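
The scoring and banding above can be captured in a few lines so that every reviewer applies the same thresholds. This is a sketch only: the item keys are hypothetical shorthand for the ten checklist entries, and the bands are the ones stated above.

```python
# Hypothetical keys, one per checklist item above.
CHECKLIST = [
    "critical_flow_availability",
    "automated_testing",
    "release_safety",
    "observability",
    "data_quality",
    "martech_hygiene",
    "ux_consistency",
    "security_compliance",
    "dependency_map",
    "operational_maturity",
]

def readiness_rating(scores):
    """Sum the ten 0-3 scores and map the total to Red, Amber or Green."""
    missing = [item for item in CHECKLIST if item not in scores]
    if missing:
        raise ValueError(f"unscored items: {missing}")
    for item in CHECKLIST:
        if scores[item] not in (0, 1, 2, 3):
            raise ValueError(f"{item} must be scored 0, 1, 2 or 3")
    total = sum(scores[item] for item in CHECKLIST)
    if total <= 9:
        band = "Red"
    elif total <= 20:
        band = "Amber"
    else:
        band = "Green"
    return total, band

# Example: every item scored 2 lands at the top of the Amber band.
print(readiness_rating({item: 2 for item in CHECKLIST}))
```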

Why scaling before fixing foundations destroys ROI

A short example: if you boost acquisition spend by 30% but conversion through checkout falls by 10% because of a fragile payment integration, your marginal cost per paying customer rises. Add in the operational cost of firefighting and the delay to shipping core retention work, and the ROI on that acquisition spend collapses.
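
To make the arithmetic concrete, here is a worked sketch. The baseline figures (100,000 spend, 50,000 visitors, 2% checkout conversion) are invented for illustration. It also assumes that visitors scale linearly with spend and that the 10% fall is relative to the baseline rate, neither of which is guaranteed in practice.

```python
def paying_customers(visitors, checkout_conversion):
    """Visitors who complete checkout."""
    return visitors * checkout_conversion

# Illustrative baseline (assumed numbers, not real data).
base_spend = 100_000.0
base_visitors = 50_000
base_conversion = 0.02

# Scenario from the text: spend +30%, checkout conversion down 10% (relative).
new_spend = base_spend * 1.30
new_visitors = base_visitors * 1.30         # assumes traffic scales with spend
new_conversion = base_conversion * 0.90

base_customers = paying_customers(base_visitors, base_conversion)
new_customers = paying_customers(new_visitors, new_conversion)

base_cost = base_spend / base_customers
blended_cost = new_spend / new_customers
# Cost of each *additional* customer bought with the extra spend.
marginal_cost = (new_spend - base_spend) / (new_customers - base_customers)

print(f"baseline cost per paying customer: {base_cost:.2f}")
print(f"blended cost after scaling:        {blended_cost:.2f}")
print(f"marginal cost per extra customer:  {marginal_cost:.2f}")
```

Under these assumptions the blended cost per paying customer rises from 100 to roughly 111, while each additional customer costs roughly 176. That is before counting firefighting time or delayed retention work.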

Fixing foundations is not an expensive luxury; it protects the multiple that investors are buying. It reduces churn, increases conversion and shortens the time it takes to launch new initiatives. In short: the safest way to scale revenue sustainably is to shrink the denominator of waste before you expand the numerator of growth spend.

From MVP to scale: what breaks first (and how to triage)

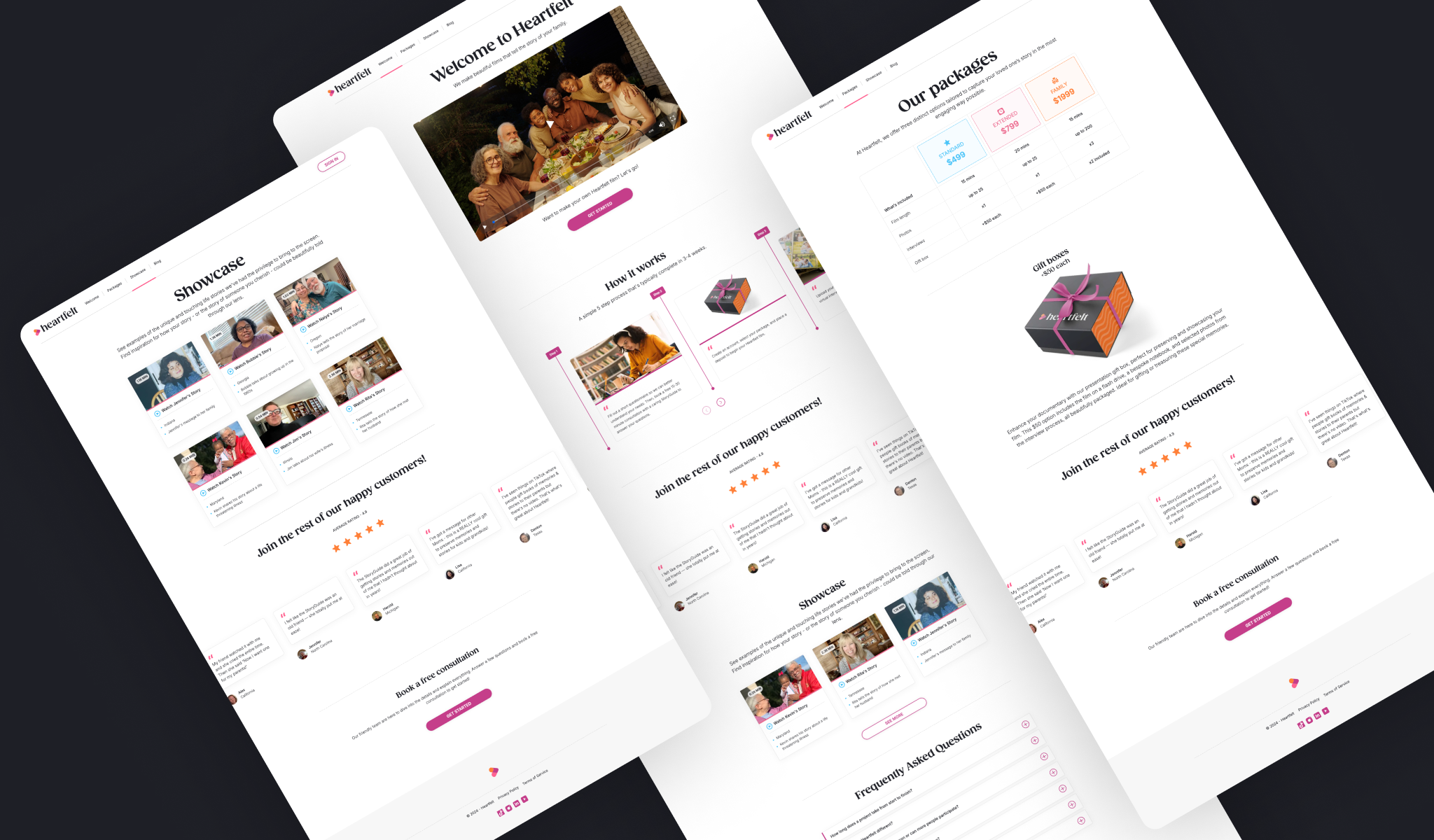

In our work helping teams scale digital ecosystems, including projects like Heartfelt.film, where growth depended on connecting experience, data and platform foundations, we see a common failure sequence emerge in fast-growing digital estates:

- Identity & billing — authentication, session handling and payment edge-cases surface first.

- Search, cache & performance — increases in load reveal caching or search bottlenecks.

- Analytics integrity — events get lost or double-counted when traffic rises.

- Third-party rate limits — external dependencies fail under real traffic patterns.

- Fragmented UX — new features patched onto old flows create inconsistent experiences.

Triage approach: stop new feature launches for 1–2 sprints; stabilise critical flows; harden infra and add observability; then resume growth with controlled experiments.

A practical three-week diagnostic (what to do now)

Week 1 — Rapid audit (executive one-pager)

- Run the 10-item checklist and collect evidence (screenshots of dashboards, key incident logs, event samples).

- Map top 5 user journeys and identify failure modes.

Week 2 — Quick wins

- Lock down tagging and restore a single customer view for the last 30 days.

- Harden payment and auth flows with focused reliability tests.

- Add basic SLOs for the three most critical endpoints.
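
A basic SLO can start as nothing more than an availability target and an error budget per endpoint. The sketch below assumes a request-based availability SLO; the 99.9% target and the request counts are placeholders, and the function name is hypothetical.

```python
def error_budget_remaining(total_requests, failed_requests, slo_target):
    """Fraction of the error budget still unspent (negative if overspent).

    slo_target is the availability objective as a fraction, e.g. 0.999.
    """
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be between 0 and 1, exclusive")
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    allowed_failures = total_requests * (1 - slo_target)
    return 1 - failed_requests / allowed_failures

# Placeholder figures for one endpoint over one reporting window.
remaining = error_budget_remaining(
    total_requests=1_000_000, failed_requests=250, slo_target=0.999
)
print(f"error budget remaining: {remaining:.0%}")
```

When the remaining budget for a critical endpoint approaches zero, that is a reasonable trigger to pause feature launches, in line with the triage approach described earlier.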

Week 3 — Roadmap & de-risk

- Produce a 90-day stabilisation roadmap: platform debt reductions, UX fixes, and a Martech clean-up.

- Quantify expected ROI from each stabilisation (reduced churn, improved conversion).

This diagnostic produces a single-page readiness rating for the board and a one-page plan for investors.

If you’d like support running this as a low-risk pilot, Polar can deliver the diagnostic end-to-end with your product, engineering and growth teams — giving you clear evidence of readiness and a practical plan to de-risk the next phase of growth. Get in touch to discuss a pilot.

The questions investors don’t ask — but absolutely should

Investors often ask about ARR and growth metrics; these are necessary but not sufficient. The missing questions are:

- What is the true cost to serve a new user in production?

- Do you have a reliable single customer view for cohort analysis?

- Which vendor's outage would hurt revenue first?

- How long does it take to restore service for critical flows, and why?

- Which metrics are synthetic (prone to error) versus reliable?

Answering these gives confidence beyond PR numbers.

Final word: frame your move to scale as risk-reduction, not delivery

Scaling is an act of trust: you ask investors to trust your infrastructure, customers to trust your product, and your team to trust your processes. Treat the digital estate as a risk portfolio. The smartest move is to de-risk critical flows first, then accelerate.

If you want a focused, board-level one-page readiness report and a three-week de-risk roadmap, start with the checklist above and quantify the top three risks by potential revenue impact. That single sheet will change the conversation from “can we grow?” to “how do we grow safely?”

Polar’s point of view is simple: make sure your platform is flawless under fire — not because you want perfection, but because the economics of scale punish brittle systems.

If you’d like a quick benchmark before you dive deeper, take the Polar Growth Stress Test. It’s a short diagnostic designed to highlight where your digital ecosystem is most likely to break under Series B-level scale.